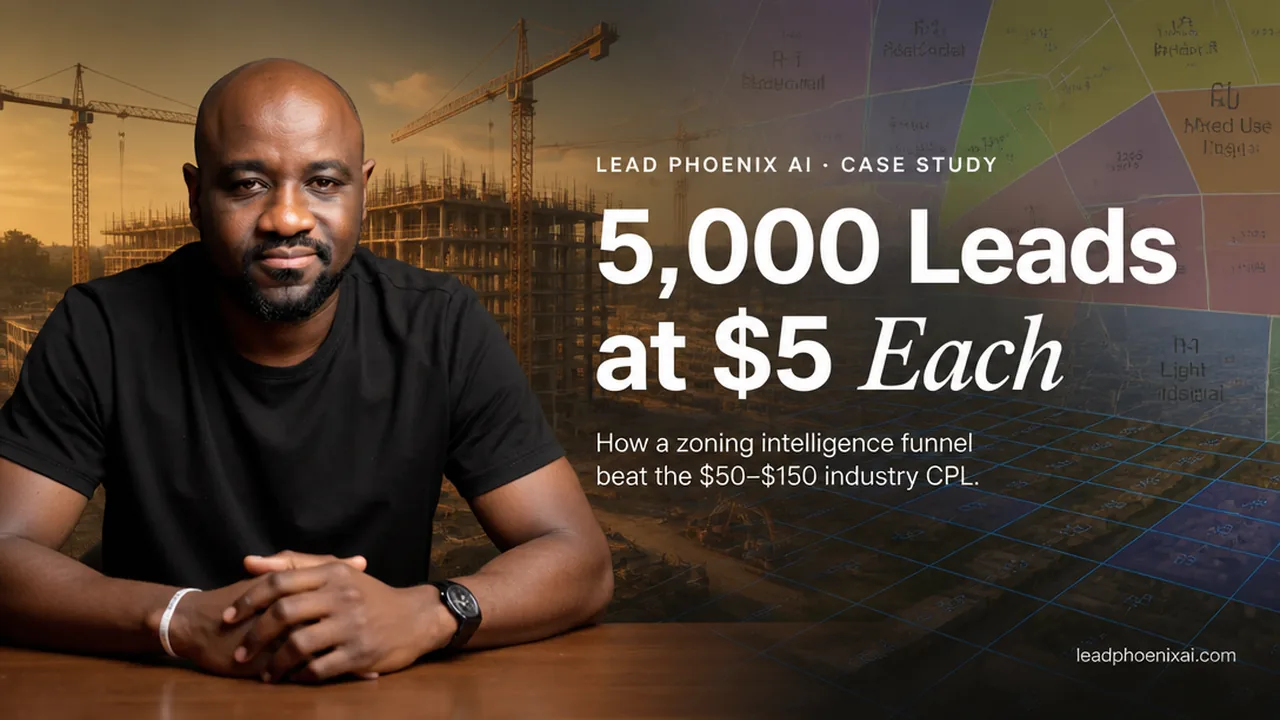

How One Construction Firm Turned Open Zoning Data Into 5,000 Leads at $5 Each

We built a funnel on top of open zoning data and generated roughly 5,000 construction leads at about $5 each. Here is the build, and where the pattern generalizes.

We built an AI lead generation engine tied to a city's open zoning data. It generated approximately 5,000 leads at around $5 each for a mid-market construction firm. The interesting part is not the numbers. It is that the same pattern is sitting in plain sight inside almost every mid-market vertical, and almost no one is using it.

This post is the walk-through: the before, the build, the KPI move, and where else this generalizes.

The before

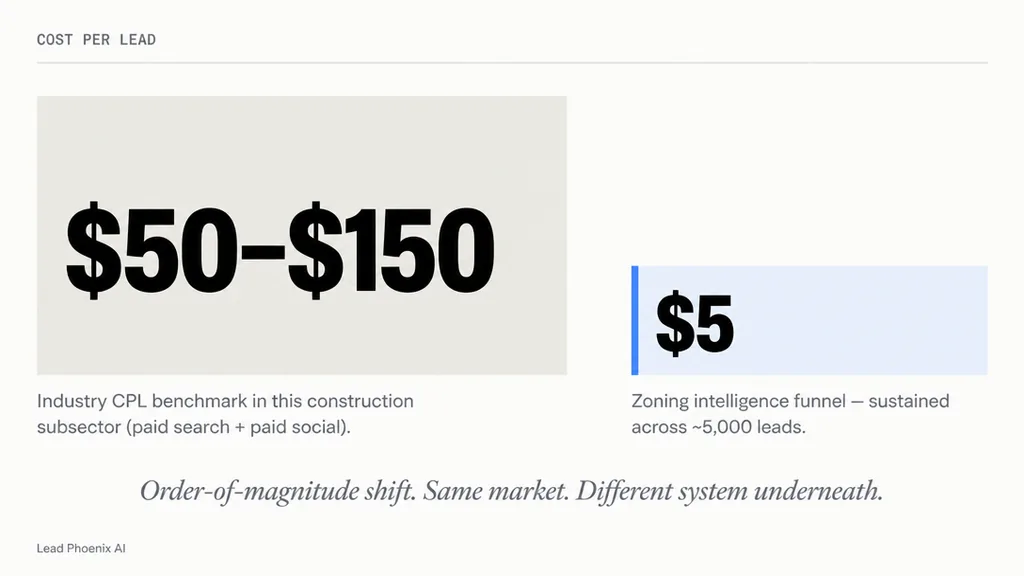

The client was a mid-market construction company with a strong local reputation and unpredictable demand. They had service capacity, market knowledge, and a sales team. What they did not have was a reliable way to manufacture demand without leaning on referrals or running standard search-and-social ads against a saturated set of construction keywords. Cost per lead through conventional channels in their subsector ran somewhere between $50 and $150 depending on the campaign. That made paid acquisition a luxury, not a system.

The team had also tried the obvious AI move — using ChatGPT to spin out blog posts and ad copy. It produced output, not leads. The problem was not the writing. The problem was that the writing was unanchored to anything specific that mattered to a real property owner this month.

The insight

The most useful AI in this engagement was not generative. It was connective.

Zoning information is public. Most Canadian and American municipalities publish updates to land-use bylaws, secondary plans, and minor variances. That data is technically accessible and practically unreadable. A homeowner with a corner lot in a newly rezoned R4 area has no idea the rule change just made their property eligible for a triplex. A small commercial developer has no easy way to scan which streets quietly went from C1 to mixed-use last quarter. The information exists; the layer that translates it into "this affects you, this week, in this specific way" was missing.

Turning public-but-unreadable data into prospect-facing intelligence is a workflow. That workflow is what we built.

What we built

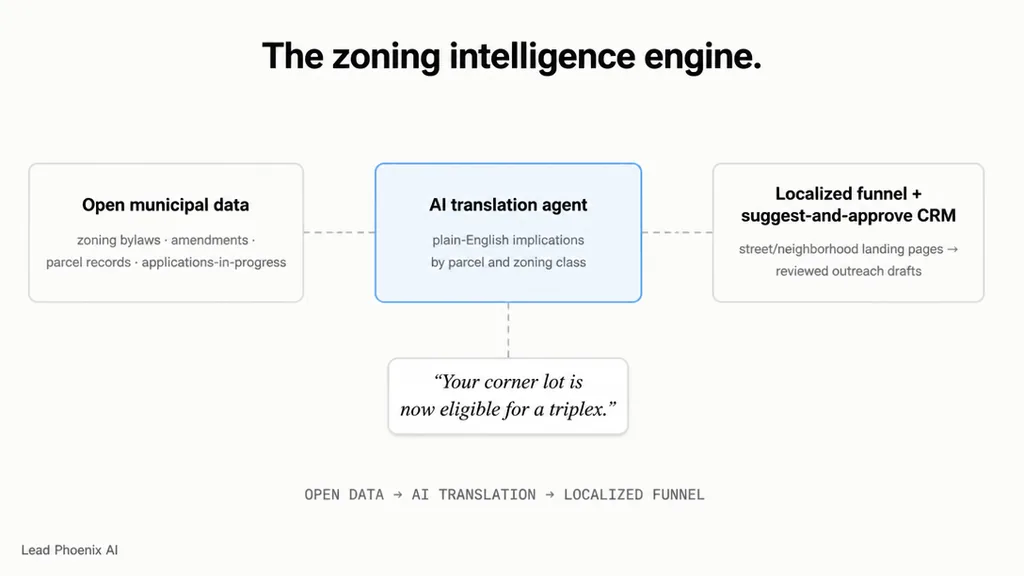

We built three pieces, stacked in the order that matters: a data connection layer, an AI translation agent, and a localized funnel with suggest-and-approve CRM follow-up.

One — the data connection. We pulled feeds from city and provincial open-data portals: zoning bylaws, recent amendments, applications-in-progress, and parcel-level changes. None of this is exotic; municipalities publish it on purpose. The work was the plumbing. We standardized the inputs into a single layer the rest of the system could read from.
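As a rough illustration of the standardization step, here is a minimal Python sketch that maps records from two differently shaped portal exports into one common record type. The portal formats, field names, and the `ZoningRecord` schema are all hypothetical; real municipal feeds vary widely and usually need far more cleaning than this.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ZoningRecord:
    """Hypothetical common schema that every downstream step reads from."""
    parcel_id: str
    street: str
    old_zone: str
    new_zone: str
    effective: date
    source: str


def from_city_portal(row: dict) -> ZoningRecord:
    # Hypothetical city export: flat upper-case keys, ISO date string.
    return ZoningRecord(
        parcel_id=row["PARCEL_ID"].strip(),
        street=row["STREET_NAME"].strip().title(),
        old_zone=row["ZONE_FROM"].strip().upper(),
        new_zone=row["ZONE_TO"].strip().upper(),
        effective=date.fromisoformat(row["EFFECTIVE_DATE"]),
        source="city",
    )


def from_provincial_portal(row: dict) -> ZoningRecord:
    # Hypothetical provincial export: nested fields, day/month/year date string.
    day, month, year = (int(part) for part in row["amendment"]["date"].split("/"))
    return ZoningRecord(
        parcel_id=row["parcel"]["id"].strip(),
        street=row["parcel"]["street"].strip().title(),
        old_zone=row["amendment"]["from"].strip().upper(),
        new_zone=row["amendment"]["to"].strip().upper(),
        effective=date(year, month, day),
        source="province",
    )


def standardize(city_rows: list[dict], provincial_rows: list[dict]) -> list[ZoningRecord]:
    """Merge both feeds into one list, de-duplicated by (parcel, effective date)."""
    merged: dict[tuple[str, date], ZoningRecord] = {}
    records = [from_city_portal(r) for r in city_rows] + [
        from_provincial_portal(r) for r in provincial_rows
    ]
    for record in records:
        # First record seen wins; city rows come first here purely by assumption.
        merged.setdefault((record.parcel_id, record.effective), record)
    return sorted(merged.values(), key=lambda r: (r.effective, r.parcel_id))
```

The point of the single schema is that the translation and funnel steps never need to know which portal a change came from.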

Two — the AI translation layer. An agent reads the raw municipal data and produces plain-English implications by parcel and zoning class. It does not write generic content. It answers a specific question for a specific property profile: given this zoning change in this neighborhood, what can a property owner now build that they could not build before? The output is localized, dated, and operationally useful. A homeowner can read it and immediately see whether they should care.
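The core question the agent answers, "what can be built now that could not be built before?", reduces to a before/after comparison of permitted uses. The agent described here derives that from bylaw text; this minimal sketch replaces it with a hand-written lookup table to show only the comparison itself. The zone codes and permitted uses below are illustrative placeholders, not any city's actual bylaw.

```python
# Illustrative placeholder table, NOT a real bylaw: zone code -> permitted building types.
PERMITTED_USES: dict[str, frozenset[str]] = {
    "R2": frozenset({"detached house", "semi-detached house"}),
    "R4": frozenset({"detached house", "semi-detached house", "duplex", "triplex"}),
    "C1": frozenset({"retail store", "office"}),
    "MU": frozenset({"retail store", "office", "apartment building"}),
}


def newly_permitted(old_zone: str, new_zone: str) -> list[str]:
    """Building types allowed under new_zone that were not allowed under old_zone."""
    before = PERMITTED_USES.get(old_zone, frozenset())
    after = PERMITTED_USES.get(new_zone, frozenset())
    return sorted(after - before)


def implication(street: str, old_zone: str, new_zone: str) -> str:
    """Plain-English summary of what a rezoning opens up on one street."""
    gained = newly_permitted(old_zone, new_zone)
    if not gained:
        return f"The change from {old_zone} to {new_zone} on {street} adds no new building types."
    return (
        f"Properties on {street} rezoned from {old_zone} to {new_zone} "
        f"can now be considered for: {', '.join(gained)}."
    )
```

For example, `newly_permitted("R2", "R4")` returns `["duplex", "triplex"]` with this placeholder table.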

Three — the funnel and follow-up. That translated content became the spine of an AI-generated website and lead funnel. Visitors searching for their street, neighborhood, or property type landed on pages that already knew what was true for their parcel. Lead capture was tied to a CRM with suggest-and-approve follow-up, so the construction team received prepared outreach drafts to review rather than a raw lead list to chase.
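Pages that "already knew what was true for their parcel" can be sketched as grouping parcel-level implications by street and giving each street a stable URL. This is a minimal sketch under assumptions: the input keys (`neighborhood`, `street`, `parcel_id`, `summary`), the `/neighborhood/street` path format, and the page title wording are all hypothetical.

```python
import re
from collections import defaultdict


def slugify(text: str) -> str:
    """Lower-case, hyphen-separated URL segment, e.g. 'Oak St.' -> 'oak-st'."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_street_pages(implications: list[dict]) -> dict[str, dict]:
    """Group parcel-level implications into one landing page per street.

    Each input item is assumed (hypothetically) to carry 'neighborhood',
    'street', 'parcel_id', and 'summary' keys.
    """
    grouped: dict[tuple[str, str], list[dict]] = defaultdict(list)
    for item in implications:
        grouped[(item["neighborhood"], item["street"])].append(item)

    pages: dict[str, dict] = {}
    for (neighborhood, street), items in grouped.items():
        path = f"/{slugify(neighborhood)}/{slugify(street)}"
        pages[path] = {
            "title": f"What the latest zoning changes mean for {street}",
            "parcels": sorted(i["parcel_id"] for i in items),
            "summaries": [i["summary"] for i in items],
        }
    return pages
```

One page per street keeps each URL tied to a query a real owner would type, which is the property the funnel relies on.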

Notice that none of these three pieces is "use AI to write more ads." Each one is infrastructure. The campaign was the visible part; the data layer underneath it is why the campaign worked.

The KPI move

The KPI was cost per lead. The benchmark in this construction subsector is $50 to $150 through paid search and paid social; the funnel hit approximately $5 per lead across roughly 5,000 leads. There are two reasons that number was reachable, and neither has anything to do with the AI being clever.

First, the content was specific in a way ad platforms reward. Pages tied to actual streets and zoning classes had high relevance, a low cost per click, and high on-page conversion. Search engines surfaced them organically because they were often the only useful answer to the underlying query.

Second, the funnel converted at a higher rate than a generic construction landing page because visitors arrived already informed about something specific to them. The friction between "I read this article" and "I would like a quote" was lower than the standard ad-to-form gap.
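For the paid share of the traffic, those two effects multiply rather than add. Assuming every lead arrives through a paid click and ignoring fixed costs such as content production, cost per lead (CPL) reduces to cost per click (CPC) divided by the landing-page conversion rate (CVR):

```latex
\[
\text{CPL}
= \frac{\text{spend}}{\text{leads}}
= \frac{N_{\text{clicks}} \cdot \text{CPC}}{N_{\text{clicks}} \cdot \text{CVR}}
= \frac{\text{CPC}}{\text{CVR}}
\]
```

Halving CPC while doubling CVR therefore cuts CPL to a quarter of its previous value. Organic search visits carry no per-click cost at all, which pulls the blended figure lower still.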

The combined effect was a cost per lead 10 to 30 times below the $50 to $150 subsector benchmark, sustained across thousands of leads. That is not a fluke. That is what happens when a system is built around real, timely information instead of generic ads.
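The arithmetic behind that comparison is easy to check from the stated figures. This sketch only multiplies the approximate numbers given above (about 5,000 leads, about $5 per lead, a $50 to $150 benchmark); it is a back-of-envelope check, not the client's actual spend report.

```python
LEADS = 5_000                   # approximate lead volume stated above
FUNNEL_CPL = 5.0                # approximate cost per lead achieved, in dollars
BENCHMARK_CPL = (50.0, 150.0)   # subsector benchmark range, in dollars


def implied_spend(leads: int, cost_per_lead: float) -> float:
    """Total acquisition spend implied by a lead count and a cost per lead."""
    return leads * cost_per_lead


funnel_spend = implied_spend(LEADS, FUNNEL_CPL)
benchmark_spend = tuple(implied_spend(LEADS, cpl) for cpl in BENCHMARK_CPL)
cost_ratio = tuple(cpl / FUNNEL_CPL for cpl in BENCHMARK_CPL)

print(f"Funnel: ${funnel_spend:,.0f}")  # Funnel: $25,000
print(f"Benchmark: ${benchmark_spend[0]:,.0f} to ${benchmark_spend[1]:,.0f}")  # Benchmark: $250,000 to $750,000
print(f"Benchmark CPL is {cost_ratio[0]:.0f}x to {cost_ratio[1]:.0f}x the funnel CPL")  # 10x to 30x
```

In other words, the same 5,000 leads bought at benchmark rates would have cost roughly ten to thirty times as much.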

Where this generalizes

The pattern is portable to any vertical with public-but-unreadable data plus a private pain someone in the market would pay to solve. AI sits in the middle as the translator. The funnel captures the demand that translation creates.

A few obvious adaptations:

- CPA firms. State and provincial regulatory updates, IRS and CRA bulletins, and small-business filing changes are public, dense, and unread. A mid-market accounting firm could run a "what changed for your business this quarter" funnel that drives advisory consultations.

- Law firms. Court filings, corporate registry changes, and bankruptcy notices are public. A litigation, M&A, or restructuring practice could surface relevant events for the firms that should care.

- PE operators. Portco financial filings, sector M&A activity, and supplier-level data are public or scrapable. A sector-focused fund could turn that into ongoing intelligence for its operating partners.

- Architecture and engineering firms. Permit data and infrastructure planning announcements are public. The same translation layer that worked for property owners works for upstream design clients.
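Each of these adaptations starts from the same operation behind a "what changed this quarter" engine: compare two snapshots of a public dataset and keep only what was added, removed, or changed. A minimal sketch, assuming each snapshot is a mapping from a hypothetical record ID to that record's fields; translating each change into client-facing language would come after this step.

```python
def diff_snapshots(previous: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Return the IDs of records added, removed, or changed between two snapshots."""
    prev_ids, curr_ids = set(previous), set(current)
    changed = sorted(rid for rid in prev_ids & curr_ids if previous[rid] != current[rid])
    return {
        "added": sorted(curr_ids - prev_ids),
        "removed": sorted(prev_ids - curr_ids),
        "changed": changed,
    }


# Hypothetical quarterly snapshots of a regulatory dataset.
q1 = {"rule-101": {"threshold": 20_000}, "rule-102": {"deadline": "April 30"}}
q2 = {"rule-101": {"threshold": 25_000}, "rule-103": {"form": "new"}}
print(diff_snapshots(q1, q2))
# {'added': ['rule-103'], 'removed': ['rule-102'], 'changed': ['rule-101']}
```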

The construction zoning play is one expression of a pattern that exists in almost every vertical. The reason it rarely shows up is that the data plumbing is unglamorous, and most consultants skip past it on the way to demoing the next agent.

The LeadPhoenix take

When we talk about lead generation as an AI workflow, this is what we mean. Not "use ChatGPT to write more posts." Not "automate your outreach." We mean: build the source-of-truth layer that connects public information to private pain, run an agent that translates the connection into prospect-facing intelligence, and let the funnel compound.

The same kind of translation layer that surfaces zoning changes for property owners outside the firm can surface RFI patterns inside Procore for the project team. Public-data engine on the outside; source-of-truth engine on the inside. Same pattern, different direction.

The firm that ran this campaign did not hire a marketing department to do it. They had a fractional AI operator embedded for the work. By the time the campaign was running, the system was producing leads on a cadence the team could service, and the cost per lead was low enough that the math worked even after factoring in close rates and project margins.

If you operate a mid-market firm in a vertical that touches public data — and almost every vertical does — there is a version of this engine for your market. The work is mapping which open data feeds matter, which private pain converts, and what the translation layer between them needs to do.

If you want a structured look at the pattern for your firm, that is exactly what an AI Readiness Audit is built to do — find the data the market is already publishing, find the pain it would solve, and design the engine that connects them.

Frequently Asked Questions

How does this case study generalize to CPA, law, or PE firms?

The pattern is the same in any vertical with public-but-unreadable data and a private pain. CPA firms can run a "what changed for your business this quarter" engine on regulatory bulletins. Law firms can surface court filings and registry events for the firms that should care. PE operators can turn portco filings and sector M&A activity into ongoing intelligence for operating partners.

How is this different from hiring a marketing agency that uses AI tools?

An agency typically generates more campaign assets faster — more ad copy, more blog posts. The engine in this case study did the opposite: almost all the AI work was on the data side, not the content side. The campaign worked because the underlying intelligence layer turned public data into prospect-level relevance, which is something a creative agency cannot ship without operator-level data plumbing.

How long does an engine like this take to build?

The data plumbing and translation layer take roughly 30 to 60 days to stand up. The funnel itself can ship in parallel within the same window. Lead volume builds gradually for the first 30 days as search engines index the localized content, then compounds as the system keeps publishing fresh property-level intelligence.

What if we already have ChatGPT licenses and a marketing team?

Most teams that have tried generic AI marketing have already discovered that ChatGPT-generated content alone does not move the pipeline. The missing piece is the connection between public data and prospect pain. The marketing team can run the campaign and creative; the operator-level work is mapping which open data feeds matter and building the translation layer that anchors the campaign to real, timely information.

How does the suggest-and-approve CRM follow-up actually work?

Each captured lead triggers an agent that drafts a property-specific outreach message referencing the zoning change, the prospect's location, and the implied opportunity. The draft routes to a human reviewer on the construction team, who approves, edits, or rejects it. Approved messages are sent, and the system learns from reviewer edits over time. The team gets the speed of automation without losing the judgment of a real responder.
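The review loop can be modeled as a small state machine. This is a minimal sketch under stated assumptions: the `Draft` fields, the statuses, and the message template are hypothetical; the drafting agent is replaced by a fixed template; "sending" only appends to an outbox; and "learning from edits" is reduced to logging each original/edited pair for a later tuning step.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    SENT = "sent"


@dataclass
class Draft:
    lead_id: str
    body: str
    status: Status = Status.PENDING


@dataclass
class ReviewQueue:
    drafts: dict[str, Draft] = field(default_factory=dict)
    edit_log: list[tuple[str, str]] = field(default_factory=list)  # (original, edited) pairs
    outbox: list[Draft] = field(default_factory=list)

    def suggest(self, lead_id: str, street: str, change: str) -> Draft:
        """Stand-in for the drafting agent: fill a fixed, hypothetical template."""
        body = f"Hi, the recent {change} on {street} may open new options for your property."
        draft = Draft(lead_id, body)
        self.drafts[lead_id] = draft
        return draft

    def review(self, lead_id: str, approve: bool, edited_body: Optional[str] = None) -> None:
        """Human decision: approve as-is, approve with edits, or reject."""
        draft = self.drafts[lead_id]
        if draft.status is not Status.PENDING:
            raise ValueError(f"draft for {lead_id} was already reviewed")
        if not approve:
            draft.status = Status.REJECTED
            return
        if edited_body is not None and edited_body != draft.body:
            self.edit_log.append((draft.body, edited_body))  # kept for later tuning
            draft.body = edited_body
        draft.status = Status.APPROVED
        self.send(draft)

    def send(self, draft: Draft) -> None:
        """Placeholder for a real CRM or email integration; here it only queues."""
        draft.status = Status.SENT
        self.outbox.append(draft)
```

Nothing reaches the outbox without passing through `review`, which is the property that keeps a human in the loop.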